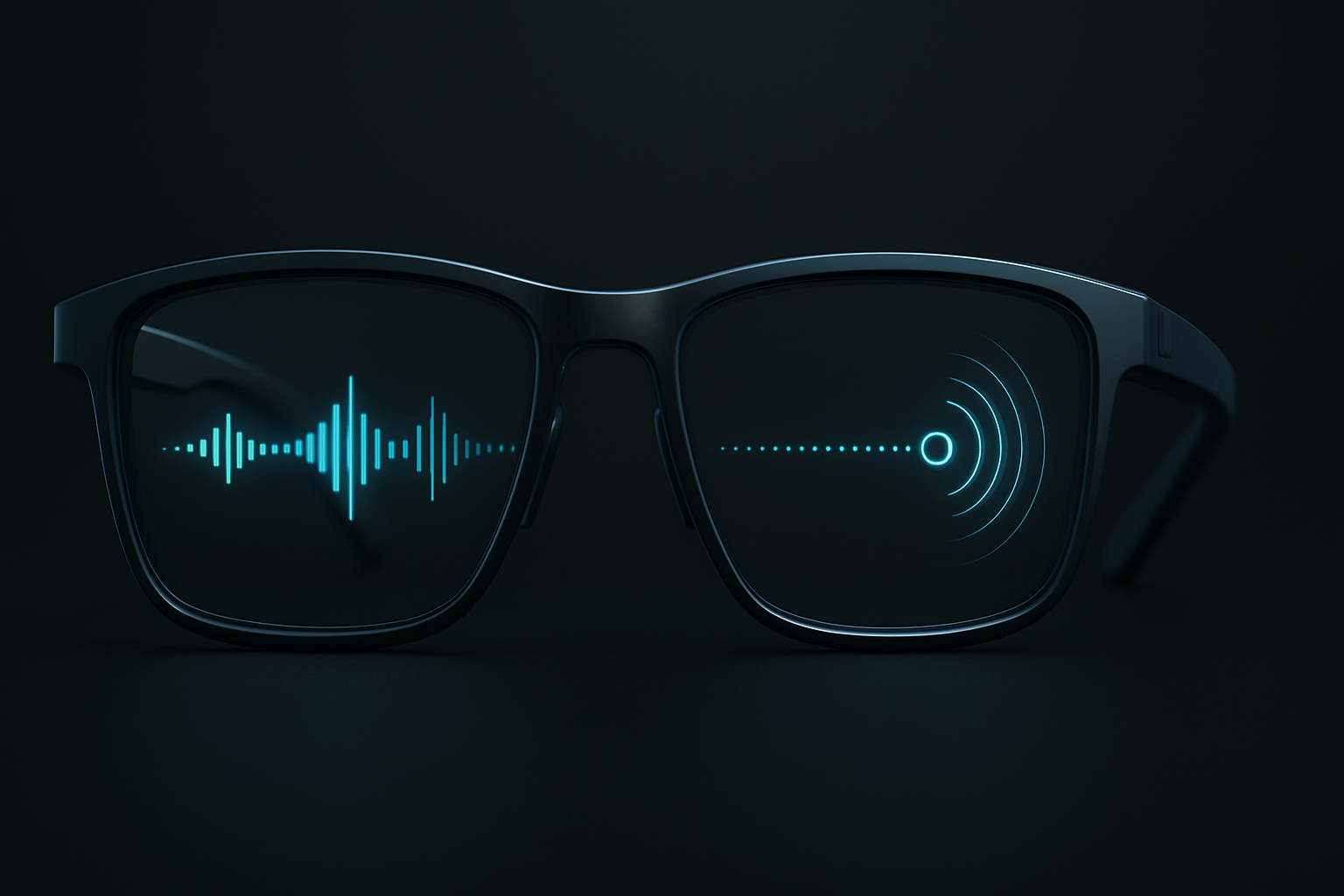

Apple Smart Glasses Voice AI Interface

April 13, 2026

Apple is building smart glasses. Not a half-baked concept, not a developer beta, but actual consumer frames with cameras, speakers, and a voice-first interface aimed at a 2026 launch. According to reports from April 12, Apple is working on multiple frame styles and a unique camera design. The same reports say the foldable iPhone is still on track for September despite earlier production fears.

This matters for one simple reason: smart glasses need voice input that actually works.

Why Voice Is the Only Real Interface for Glasses

When you are wearing something on your face, you cannot look down at a keyboard. You cannot tap a touchscreen. The only hands-free, eyes-free input method is your voice.

That is not a new insight. But the execution has always fallen short. Early voice assistants were too slow, too inaccurate, and too awkward for real work. "Hey Siri, send that email" is fine as a novelty. It is not how professionals get things done.

The problem has always been latency and accuracy. By the time a voice system transcribes what you said, processes it, and gives you feedback, you have lost the flow. The promise of "just speak and it happens" stays just out of reach.

What DictaFlow Brings to the Table

DictaFlow is built around a simple idea: your voice should produce text as fast as your fingers can, with the accuracy to match. For smart glasses wearers who need to take notes, draft messages, or capture ideas without breaking stride, that is not a nice-to-have — it is the whole value proposition.

Key features that map directly to the smart glasses use case:

- Hold-to-Talk (push-to-talk, PTT): Press a button, speak, release. Transcription starts the moment you press and stops when you let go, so there is no wake word to misfire and no ambient chatter picked up between utterances. This maps well to glasses: one physical gesture or a tap on the frame triggers input.

- Mid-Sentence Correction: Make a mistake halfway through a thought and correct it naturally. "Set the meeting for Tuesday no wait Thursday at three." DictaFlow handles that gracefully. Most voice systems do not.

- Cross-Platform Support: Apple's smart glasses are expected to connect to Windows PCs, MacBooks, and iPhones over Bluetooth. DictaFlow runs natively on Windows and Mac, giving users a consistent voice input layer regardless of which device is in the loop.
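
To make the first two features concrete, here is a minimal Python sketch of how hold-to-talk and mid-sentence correction could fit together. It is a toy illustration, not DictaFlow's implementation: the `HoldToTalkSession` class, the `apply_corrections` helper, and the list of spoken markers are all hypothetical names invented for this example, and the correction rule is a deliberately naive one that replaces only the single word spoken before the marker.

```python
import re

# Hypothetical spoken correction markers, chosen for illustration only.
CORRECTION_MARKERS = ("no wait", "scratch that", "i mean")

# Matches: the word before a marker, the marker, and surrounding spaces/commas.
_CORRECTION = re.compile(
    r"\S+[\s,]+(?:" + "|".join(re.escape(m) for m in CORRECTION_MARKERS) + r")\b[\s,]*",
    flags=re.IGNORECASE,
)


def apply_corrections(transcript: str) -> str:
    """Naive rule: drop the single word before each marker, plus the marker."""
    return _CORRECTION.sub("", transcript)


class HoldToTalkSession:
    """Collects words only while a (hypothetical) frame button is held."""

    def __init__(self):
        self.held = False
        self.words = []

    def press(self):
        # Start a fresh utterance each time the button goes down.
        self.held = True
        self.words = []

    def hear(self, word):
        # Speech that arrives while the button is up is ignored,
        # which is why this style of input needs no wake word.
        if self.held:
            self.words.append(word)

    def release(self):
        # Stop listening and return the corrected text.
        self.held = False
        return apply_corrections(" ".join(self.words))


session = HoldToTalkSession()
session.hear("background")  # button not held, so this is dropped
session.press()
for word in "Set the meeting for Tuesday no wait Thursday at three.".split():
    session.hear(word)
print(session.release())
```

A production system would need more than a regular expression here, since a speaker may correct a whole phrase rather than one word, but the sketch shows the shape of the interaction: the button bounds the utterance, and the correction pass runs on the finished text.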

The VDI Factor Nobody Is Talking About

Here is something the smart glasses hype cycle is missing: a large share of professional workers do not sit at a local machine. They run Windows inside Citrix or RDP sessions. The virtual desktop infrastructure that powers hospitals, law firms, and enterprises was never designed to deliver voice input with less than 300 ms of latency.

DictaFlow is designed to work in those environments. When your coworkers put on smart glasses and try to dictate into a remote Windows session, they will hit that latency wall immediately. DictaFlow is built to avoid it.

The Bottom Line

Apple's smart glasses are not just a new device category — they are a bet that voice will finally be a first-class input method for everyday computing. If that bet pays off, the apps and tools that matter most will be the ones that handle voice input fastest and most accurately.

DictaFlow is built for exactly that moment. Whether you are wearing glasses, sitting at a desk, or working through a Citrix session, the principle is the same: your voice should produce text at the speed of thought.

